How do I Optimize my Website so it is Easy to Crawl? When you put data on the internet it is very hard to protect it. Bots that do not play by the rules and want your data.Īlthough there are some advanced techniques for dealing with these bad bots. If they built a bot that ignored the robots.txt file there would be an outcry. After all, reputable companies have built these bots. Now nothing is stopping these bots from ignoring the file but, they won't. In the above it allows Googlebot and disallows all other bots. This is an example of what a robots.txt file looks like: This file contains a set of rules that bots will follow when they visit your site. It is a special file that you can add to your website usually found at the root of your website: After all, do you want to give your data to all these bots? With so many bots crawling the internet it is a fair question to ask. You may be wondering can they all crawl my site? Can Crawlers always Crawl my Site? Built for different purposes by companies and individuals. Uptimebot - Used by the service to track if your website goes down.This is a bot that tracks the rankings of all the websites on the internet Grapeshot - This is an Oracle Data Cloud Crawler a web crawler that downloads data from the internet.SemrushBot - Another SEO company with similar products to Ahrefs.AhrefsBot - An SEO company that tracks web page rankings and more.Without the Twitterbot, this would not be possible. Now instead it can display a big card like this: Twitterbot will visit the page and grab extra information. For example, rather than showing a simple link in a tweet. So they use the bots to get this content.įor social media sites like Facebook and Twitter, these bots enhance links on their site. For search engines like Google, all their content is from other sites. These companies use the bots to add your content to their website. 
YandexBot - This is a Russian search engine.Baiduspider - This is a Chinese search engine.DuckDuckBot - Used by the DuckDuckGo search engine.Many websites have a bot, here are some of the most popular ones: This process repeats every day for millions of websites across the internet. Add the content to the search engine index.Try to understand what the content is about.They have a list of URLs that they visit. This is how web crawlers like Googlebot and Bingbot work. Our web crawler could then go to that website and repeat the process. It will then discover URLs or links to other websites. The process repeats as we visit the next link.Īfter some time our web crawler will have visited all the pages on. Once we have the HTML we can look for more links and save these to a list to visit later. It then downloads the HTML from Amazon and has a look for all the links.

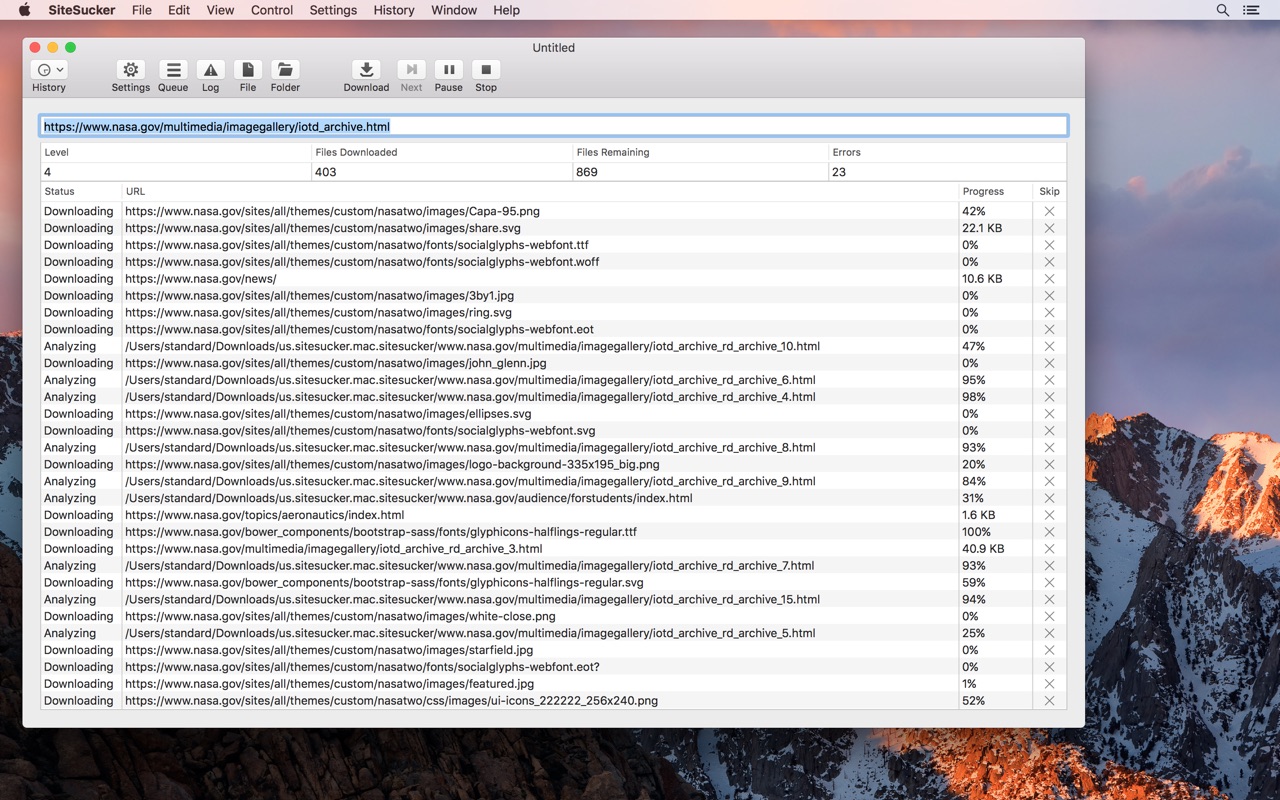

We give this URL to our web crawler and it goes and fetches the webpage. We need to start with a list of URLs that we want to target. Let's pretend that we are creating a web crawler to search the web for us. Later, we will look at how you can block some of these unwanted guests.īefore we look at that, it is good to understand how web crawlers work. Including all the HTML, images, PDFs, etc to someone's hard disk.Īnyone can install and run Sitesucker from anywhere. This is a Mac application that will download all the contents of a website. For example, there are bots like Sitesucker. Some bots are good like Googlebot, Bingbot, Facebot, and Twitterbot. Once they discover a link, they visit the page and read the web page contents. How do I Optimize my Website so it is Easy to Crawl?Ī web crawler, spider, robot or bot is software that will crawl the web by following links it finds.And how you can optimize your site for crawling. We will cover some of the basics on how you can control where these bots can go on your site. In this article, we will look at web crawlers the good and the bad ones. The index is where Google stores information about your website. Once it understands what the page is about, it will add the pages to the search engine index. Googlebot will visit your website and read the content of the page. The most popular web crawler is Googlebot. A web crawler is software built to read the contents of web pages all over the internet. Let's dive into the world of web crawlers.
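To make the crawling process described in this article more concrete, here is a minimal sketch of the "fetch a page, collect its links, visit them later" loop. It is an illustration under stated assumptions, not how Googlebot or any real crawler is implemented: the `crawl` function, the `LinkExtractor` class, and the tiny in-memory `FAKE_WEB` (with made-up `example` URLs) are all hypothetical, and the sketch reads pages from a dictionary instead of the network so it can run anywhere.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_urls, fetch, max_pages=100):
    """Visit pages breadth-first, starting from seed_urls.

    fetch(url) is assumed to return the page HTML, or None if the
    page cannot be retrieved.
    """
    frontier = deque(seed_urls)   # the list of URLs to visit later
    seen = set(seed_urls)         # URLs already queued, to avoid repeats
    visited = []                  # pages fetched, in visiting order

    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)

        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            link = urljoin(url, href)  # resolve relative links
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited


# Hypothetical four-page "web" held in memory instead of the real internet.
FAKE_WEB = {
    "https://shop.example/": '<a href="/books">Books</a> <a href="/toys">Toys</a>',
    "https://shop.example/books": '<a href="/">Home</a> <a href="https://blog.example/">Blog</a>',
    "https://shop.example/toys": '<a href="/books">Books</a>',
    "https://blog.example/": "<p>No links here.</p>",
}

order = crawl(["https://shop.example/"], FAKE_WEB.get)
print(order)
```

The crawler starts on one site, works through its pages, and then follows a link out to a second site, which mirrors the walkthrough in the article. A real crawler would replace `FAKE_WEB.get` with an HTTP request and would also need politeness delays and error handling, which are left out here.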
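The article describes a robots.txt file that allows Googlebot and disallows all other bots, but the file itself did not survive in the text. The sketch below shows what such a file could look like and how a well-behaved bot might check it using Python's standard `urllib.robotparser` module. The rules in `ROBOTS_TXT` are an assumed reconstruction based on that description, and the `example.com` URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Assumed robots.txt contents: Googlebot may crawl everything,
# every other bot is asked to stay away.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

rules = RobotFileParser()
# parse() takes the file as a list of lines, so no network access is needed.
rules.parse(ROBOTS_TXT.splitlines())

page = "https://example.com/products"  # placeholder URL
googlebot_allowed = rules.can_fetch("Googlebot", page)
ahrefsbot_allowed = rules.can_fetch("AhrefsBot", page)

print("Googlebot allowed:", googlebot_allowed)
print("AhrefsBot allowed:", ahrefsbot_allowed)
```

Note that this check runs inside the bot, not on your server. That is why robots.txt only works for bots that choose to follow it.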
